Speeding up Ŝako Detection with Amazons

A fast way to search a graph: Don't search it.

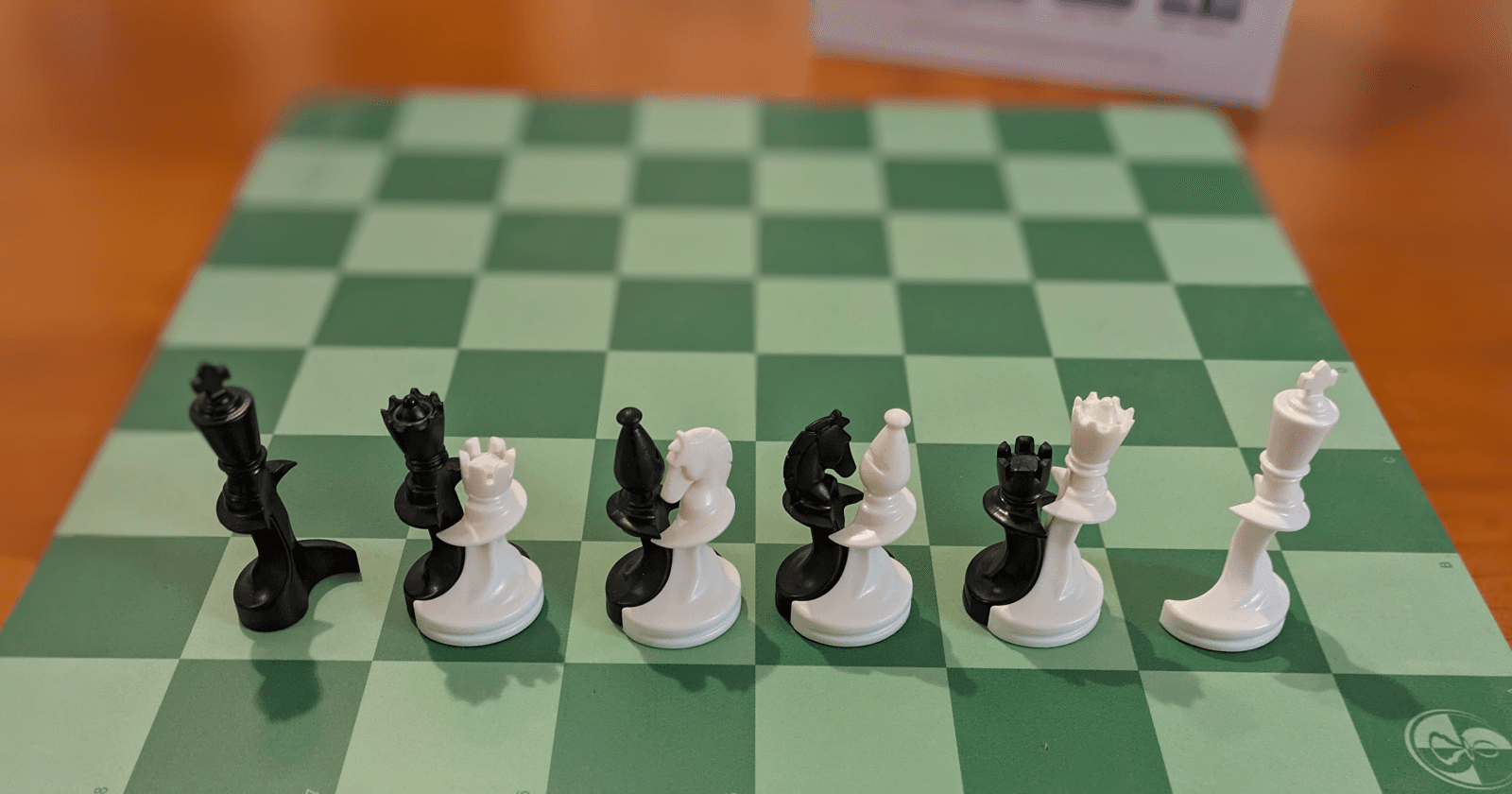

Paco Ŝako is a chess variant where figuring out if you are actually in check (or Ŝako as we call it) is pretty tricky. Pieces can't just move directly but can also free other pieces from unions which then starts a chain reaction.

So to figure out if you are currently in Ŝako you need to look at the graph of all possible actions in a chain. This is quite slow to do and we want to do this pretty often for some kinds of analysis, so I want to have a faster way to do it. For this, I designed the "Reverse Amazon Algorithm" to skip this search completely when this is possible to detect. What is the algorithm and why the name?

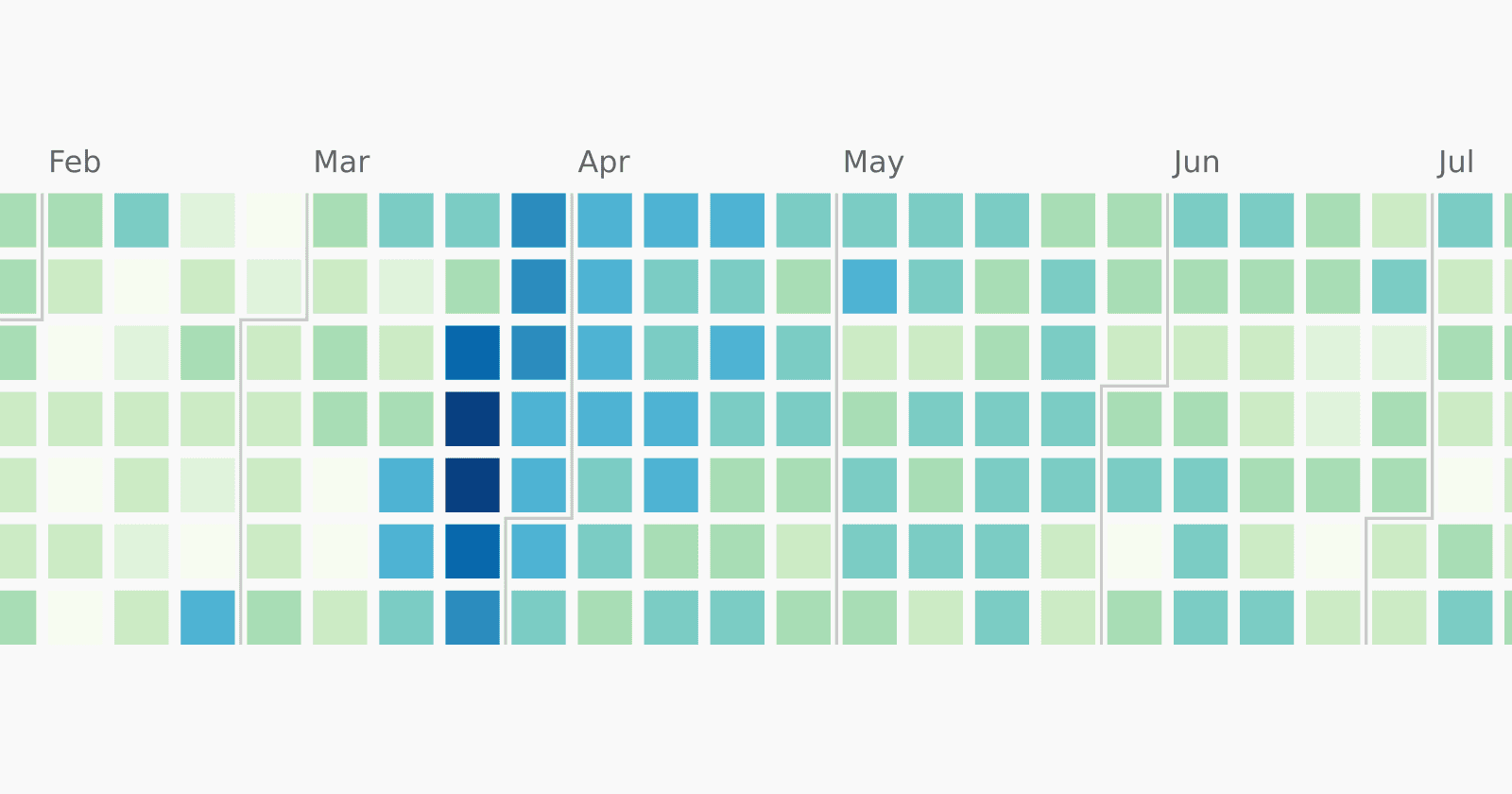

It is unrelated to online shopping and also unrelated to rivers. Instead, it refers to the Amazon fairy chess piece which can move like a queen and a knight combined. Pieces move symmetrically, so I can also start at the king and look "in reverse". Any square that is within "amazon reach" of the king is a potential threat to him. Here are the squares that we see with the first look:

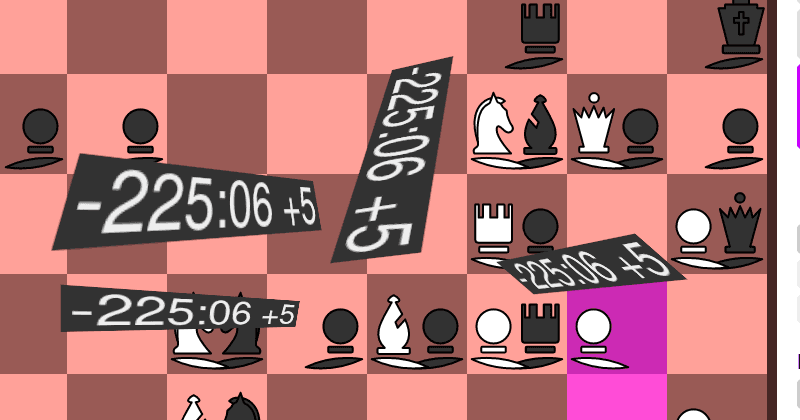

The squares which have either a pair on them or a single piece are the potential threats. We then focus on them and look at any squares that are within amazon reach of those. That is repeated until there are no more involved squares to check.

I can then run the graph search algorithm on a reduced graph. We only consider involved free pieces for the start and we only chain into pairs that are also involved.

If there is no free piece involved, then there is nothing to start and we can skip the complete graph search. I believe this "skipping" is where we save most of the time.

How much faster are we?

I didn't include any benchmarks until now, so how much did this optimization help compared to just running a full graph search on every position? The benchmark here is "training data generated for an AI we are training". For me, this went from "11 samples per second" to "120 samples per second". My friend had a similar improvement: going from 30 to 260 on his better CPU. This is a big win and it already allowed us to train a first model "Ludwig" that significantly outperforms our first attempt "Luna" which was a non-learning AI.

Notes on what makes this more difficult

En passant

While there is no piece on the en passant square, it can still be involved in a chain. The simplest way to deal with this is to mark it as involved and consider it occupied by a pair. This is pretty generous and we could likely reduce the search space by being more deliberate in how we do this. But the en passant is too rare to be worth more optimization here.

Rays through resting pieces

Since the chain is always started by lifting a free piece and starting to move it, this frees a piece. We'll later be able to move across this free space. So when we follow the queen rays to find involved squares, we need to look through one free piece at the piece behind that. If it is a pair, it is noted as involved as well.

This provides an opportunity for second-level optimization. After the first iteration of the reverse amazon algorithm, we'll know resting pieces that are not involved so we know that rays can't move through them. We can rerun a slightly modified algorithm where we don't look through the uninvolved resting pieces which potentially reduces the search space for the graph search, maybe even eliminating it.

More optimization opportunities

The second-level optimization also works in other ways. If we see e.g. that there are no involved knights, we can rerun the reverse amazon search with a restricted move set. This gives us a chance to reduce the set of involved pieces again and maybe run an even more restricted amazon search again. Right now no second-order optimizations are implemented. If you are interested in exploring this yourself, have a look at the code on GitHub!

A reverse amazon search should also be used to determine if castling is legal but I have not upgraded the castling code yet. That part is definitely on my to-do list and I'll keep a ticket open until I get around to it.