Optimizing Paco Ŝako with Flamegraphs

We'll use flamegraphs together with the regression test suite we build in the last article to find bottlenecks in my implementation of the Paco Ŝako game that is powering pacoplay.com.

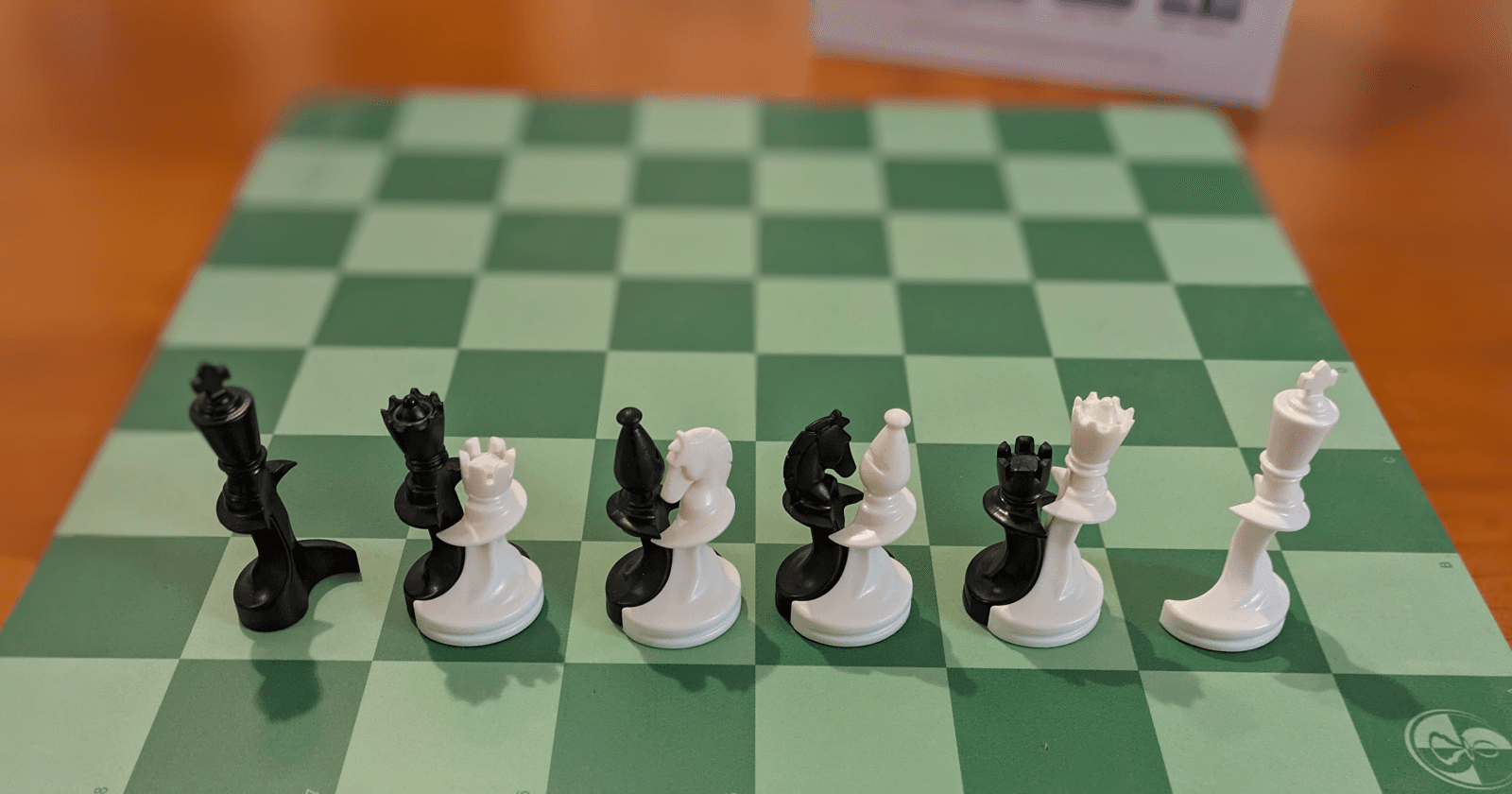

Paco Ŝako is a chess variant where you can't kill any pieces. Everything stays on the board and the game gradually evolves into an ever more complex web of possibilities.

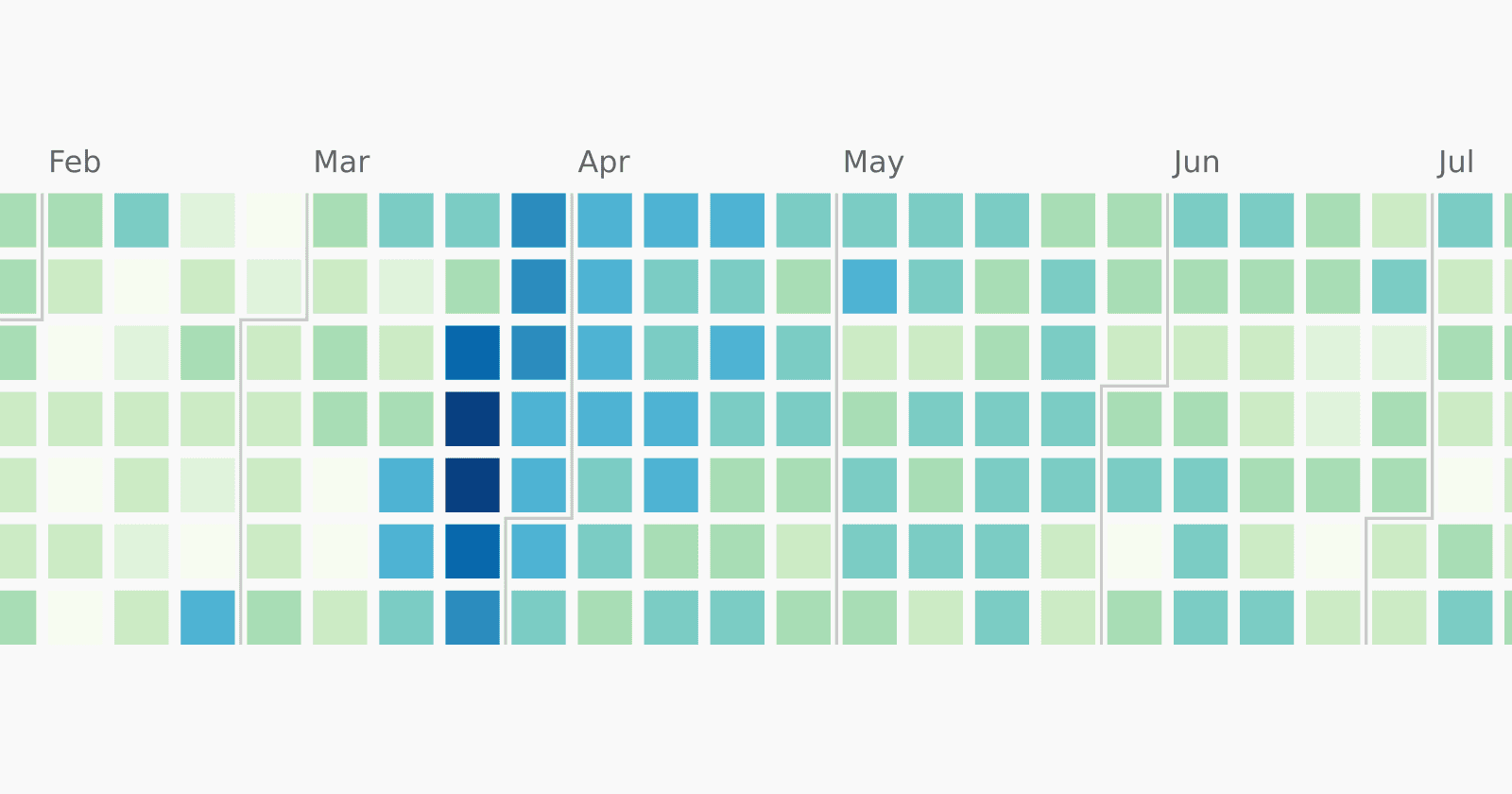

Right now, asking the game engine to evaluate all 3500 games that were played takes 5.2 seconds on my machine using a single core. I installed cargo flamegraph to get an idea what it is spending it's time on. After a bit of fiddling with the parameters, I arrived at this:

> cargo flamegraph --test validate_all_played_games

running 2 tests

test build_regression_file ... ignored

test regression_run ... ok

test result: ok. 1 passed; 0 failed; 1 ignored; 0 measured;

0 filtered out; finished in 5.29s

[ perf record: Woken up 172 times to write data ]

[ perf record: Captured and wrote 42,916 MB perf.data (5274 samples) ]

writing flamegraph to "flamegraph.svg"

That's colorful, but what does it actually tell us? Let's zoom in!

Flamegraphs are upside down. So the outer most method we are interested in is regression_run at the bottom which is the integration test that got executed by our integration test. It spends a large chunk of its time in actions to find out which moves are legal. Most of that time goes to pieces_that_can_be_lifted where we figure out which pieces are allowed to start a move.

Now this is surprising, because shouldn't that be a trivial operation? You can lift all the pieces of your color, right? That used to be right, but at some point I reduced the ways to get stuck with a board and needing to rollback. Seems like this was initially triggered by the AI project, but it also makes playing online a lot nicer.

This is also the change that broke games 218 and 219 for the regression test. We had to exclude those from the data set in the last article.

/// To help the AIs a bit, we are not allowing it to lift any pieces

/// that get stuck instantly. They need to have at least one

/// position where they can be placed down again.

fn pieces_that_can_be_lifted(&self) -> Result<Vec<PacoAction>, PacoError> {

let mut result = vec![];

for p in self.active_positions() {

let is_pair = self.opponent_present(p);

let piece = *self.active_pieces().get(p.0 as usize).unwrap();

if let Some(piece) = piece {

let targets = self.place_targets(p, piece, is_pair)?;

if !targets.is_empty() {

result.push(PacoAction::Lift(p));

}

}

}

Ok(result)

}

Now that could probably use some improvements in method names and helper function availability... The gist is, that we iterate over all our pieces and find out some information about them (where are they, are they dancing, is it a pawn, rook, bishop, ..?). Then we ask the place_targets method if there is any way to put this piece down again.

This already smells quite inefficient, because we are constructing a vector of all possible positions where we can put the piece down when we really only want to know if a single target exists. Looking a step deeper, it is the place_targets_king which eats most of the time in determine_all_threats. What is that?

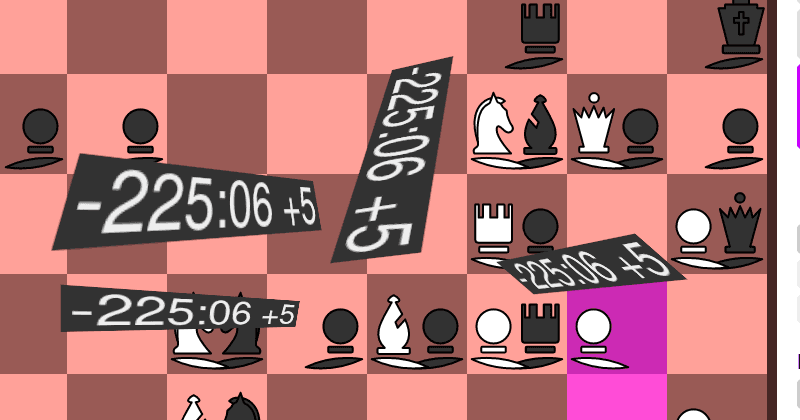

As in regular chess, you can only castle with king and rook if none of the squares the king would pass over are threatened by the opponent. (Including start and end square.) While in regular chess this is relatively simple, in Paco Ŝako it is not immediately obvious which pieces can threaten a square. This is because when a free piece is placed on a dancing couple your first piece enters the dance and the second one leaves, giving you essentially an extra move.

Here we have a short video of a chain where the king gets captured. There are several pieces involved and the knight even loop around to give the pawn an extra move.

Essentially this means we need to search a directed graph of game states (possibly containing infinite loops) to see if castling is even possible.

Luckily we don't need to optimize the graph algorithm yet. Whenever the king is able to castle, it is also able to move just a single square. Since we only care if any movement is possible at all, we can introduce a special case for the king:

fn pieces_that_can_be_lifted(&self) -> Result<Vec<PacoAction>, PacoError> {

let mut result = vec![];

for p in self.active_positions() {

let is_pair = self.opponent_present(p);

let piece = *self.active_pieces().get(p.0 as usize).unwrap();

if let Some(piece) = piece {

// For the King we need a special case, otherwise we would be

// checking castling options which is expensive.

// Since a castling option implies a move option, there is no

// need to check for castling options.

if piece == PieceType::King {

let targets = self.place_targets_king_without_castling(p);

if !targets.is_empty() {

result.push(PacoAction::Lift(p));

}

} else {

let targets = self.place_targets(p, piece, is_pair)?;

if !targets.is_empty() {

result.push(PacoAction::Lift(p));

}

}

}

}

Ok(result)

}

The king always has the same type King and never is in a pair which cuts down on variables we need to pass into place_targets_king_without_castling.

Let's make another flamegraph to see if we improved!

Well, that tells me precisely nothing :-(

Flamegraphs are a great tool at telling you where you spend time and where you should look for optimizations. But they can't really tell you how you improved because they are all relative.

But we do have the absolute time it took to run the regression test suite:

> cargo flamegraph --test validate_all_played_games

running 2 tests

test build_regression_file ... ignored

test regression_run ... ok

test result: ok. 1 passed; 0 failed; 1 ignored; 0 measured;

0 filtered out; finished in 1.53s

[ perf record: Woken up 51 times to write data ]

[ perf record: Captured and wrote 12,744 MB perf.data (1526 samples) ]

writing flamegraph to "flamegraph.svg"

With only 1.53 seconds instead of 5.29 seconds as before, we are more than 3 times faster!

So where do we go next?

Zooming in again, we see that determine_all_threats still consumes most of the time. But now it mostly gets called from place_targets where we figure out where to place the current piece. That is where we expect it to be called, thought it still surprises me that it takes such a big percentage.

Most of this this time seems to be spend inside HashMap and indeed we have a set where we store all states we have ever seen. We need that information because otherwise we could get stuck in infinite loops.

For chess board there is a technique called Zobrist hashing which stores the hash value of a board and incrementally updates it whenever a move is made. That should save almost all the computation required for this. I'll look this as a way to optimize this further in a follow up article.

Also, I just glanced over the "directed graph of game states containing infinite loops" that we need to search if we want to see if castling is even possible. That should warrant a small article on its own.