Snapshot Testing the Paco Ŝako Game

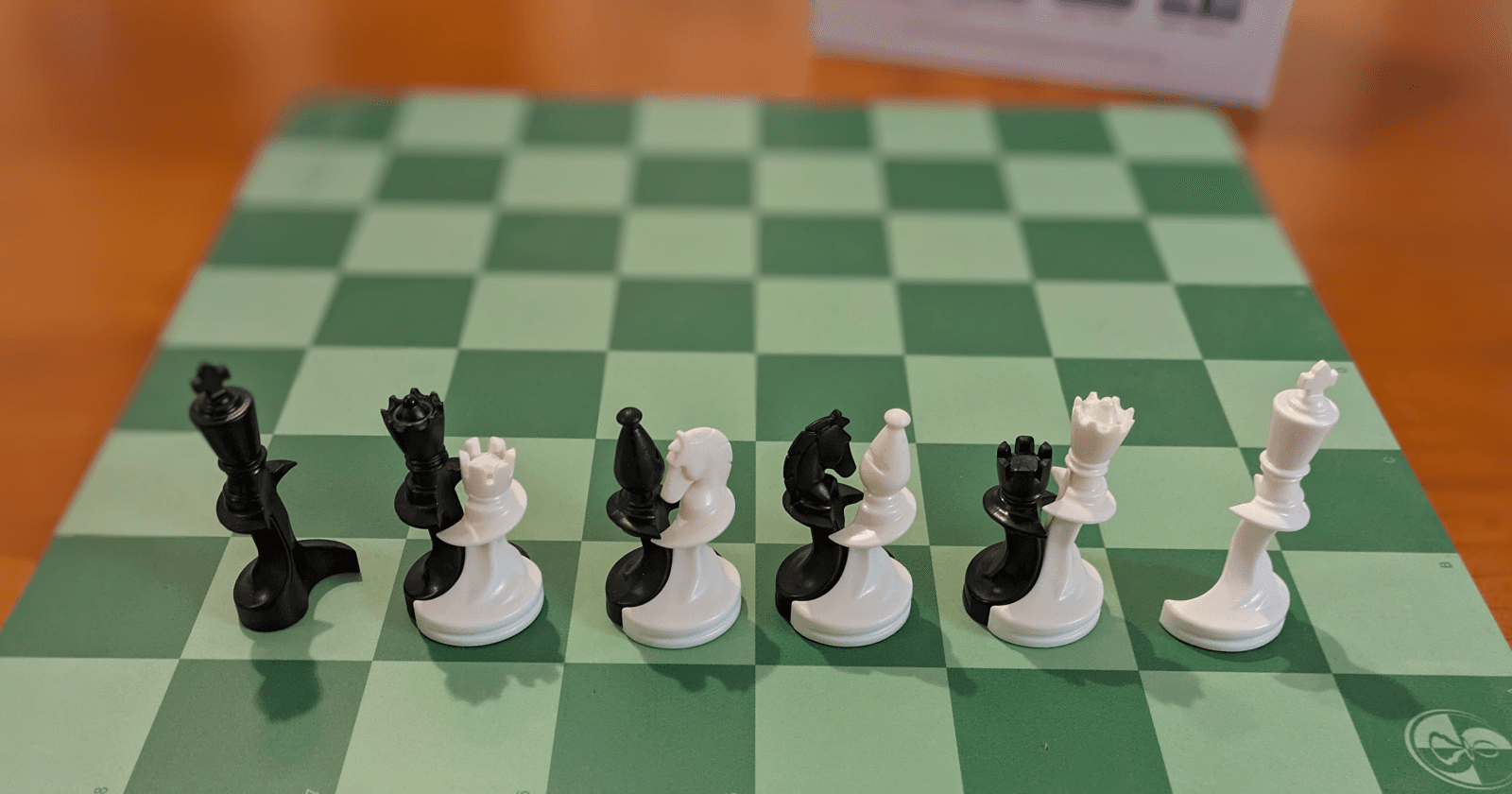

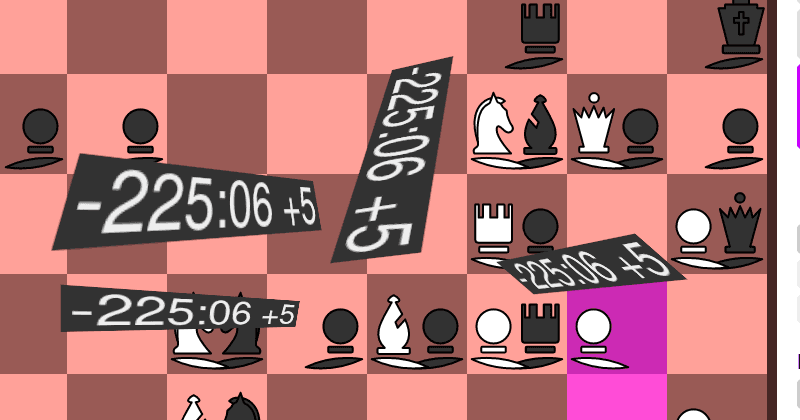

Paco Ŝako is a chess variant where you can't kill any pieces. It's a growing mess of a dance floor where the goal is to dance with the opponent's king. And I wrote an implementation of it.

It's not a great implementation, mind you. I have no idea how to properly build a chess engine. But it seems to be mostly bug-free and has been powering the PacoPlay website for a while now.

I'd like to clean the game implementation up a bit and add some variants like PacoŜako960 to it. There are already a few dozen tests covering all the rules, but I would like some extra confidence that I'm not spoiling the fun for our little community or breaking Felix's Monday evening stream. Luckily, we have already played a few thousand games. Maybe I can leverage that?

There is a way, and it's called snapshot testing, or output comparison testing, as Wikipedia calls it. I'll also be calling it regression testing here, since that is the name I use in the code.

Getting the Game Data

I started by getting a copy of the production database and asking it for all games with at least one move. That already whittled it down from 4108 to 3552 games. I then asked SQLite to please glue all of that together into one big JSON list.

    select json_group_array(json_object('id', id, 'history', action_history))
    from game where action_history != '[]';

{"id":1,"history":"[

{\"Lift\":12,\"timestamp\":\"2020-12-06T16:27:52.277541068Z\"},

{\"Place\":28,\"timestamp\":\"2020-12-06T16:27:52.934204496Z\"}, ...

We can see that the first ever game on PacoPlay was played in early December 2020, but the timestamps don't matter for validating that the engine still works. A bit of search and replace later, I had it in better shape:

[{"id":1,"history":[{"Lift":12},{"Place":28},{"Lift":52},{"Place":36},{"Lift":11},{"Place":27},{"Lift":57},{"Place":42},{"Lift":5},{"Place":33},{"Lift":62},{"Place":45},{"Lift":6},{"Place":21},{"Lift":51},{"Place":43},{"Lift":10},{"Place":18},{"Lift":58},{"Place":37},{"Lift":4},{"Place":6},{"Lift":59},{"Place":51},{"Lift":27},{"Place":35},{"Lift":48},{"Place":40},{"Lift":9},{"Place":25},{"Lift":45},{"Place":28},{"Lift":33},{"Place":42},{"Lift":42},{"Place":27},{"Lift":18},{"Place":27},{"Place":34},{"Lift":43},{"Place":34},{"Lift":21},{"Place":38},{"Lift":36},{"Place":27},{"Place":12},{"Lift":6},{"Place":7},{"Lift":37},{"Place":28},{"Place":22},{"Lift":3},{"Place":27},{"Place":34},{"Place":52},{"Lift":22},{"Place":7}]}]
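The post does not show the search and replace itself. As a rough sketch of what such a cleanup could look like in Rust, assuming the history string has the exact shape of the excerpt above: the helper names `strip_timestamps` and `clean_history` are made up for illustration and are not part of the PacoPlay code.

```rust
/// Hypothetical helper: removes every `,"timestamp":"..."` field from a JSON string.
/// Assumes the timestamp value itself contains no quote characters.
fn strip_timestamps(json: &str) -> String {
    const KEY: &str = ",\"timestamp\":\"";
    let mut out = String::with_capacity(json.len());
    let mut rest = json;
    while let Some(start) = rest.find(KEY) {
        // Keep everything before the timestamp field.
        out.push_str(&rest[..start]);
        let after_key = &rest[start + KEY.len()..];
        match after_key.find('"') {
            // Skip the value and its closing quote.
            Some(end) => rest = &after_key[end + 1..],
            // Unterminated value: leave the remainder untouched.
            None => {
                out.push_str(&rest[start..]);
                return out;
            }
        }
    }
    out.push_str(rest);
    out
}

/// Hypothetical helper: turns the escaped history string from the database
/// dump into plain JSON without timestamps.
fn clean_history(escaped: &str) -> String {
    // First undo the `\"` escaping, then drop the timestamp fields.
    strip_timestamps(&escaped.replace("\\\"", "\""))
}
```

Plain string surgery like this only works because the dump is so regular. For anything less uniform, parsing the JSON properly would be the safer route.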

I'm storing moves as a "Lift" together with a separate "Place", because Paco Ŝako can have long move chains where the first placing frees a second piece which frees a third piece which frees ... But really, the content doesn't matter. I now have something that the engine accepts as input. I'll be able to read that in the test and then generate all the legal moves to verify that they didn't change.
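To make the chain idea concrete, here is a small sketch that groups a flat action list into moves, where each move starts with a lift and collects every placement that follows it. The `Action` enum is a simplified stand-in for the real `PacoAction` (which may have more variants), and `group_into_moves` is a name invented for this example.

```rust
/// Simplified, hypothetical stand-in for the engine's `PacoAction`.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum Action {
    Lift(u8),
    Place(u8),
}

/// Groups a flat history into moves: a `Lift` opens a new move and every
/// following `Place` is appended to it, so chains end up in one group.
fn group_into_moves(history: &[Action]) -> Vec<Vec<Action>> {
    let mut moves: Vec<Vec<Action>> = Vec::new();
    for &action in history {
        match action {
            Action::Lift(_) => moves.push(vec![action]),
            Action::Place(_) => match moves.last_mut() {
                Some(current) => current.push(action),
                // A placement without a preceding lift gets its own group.
                None => moves.push(vec![action]),
            },
        }
    }
    moves
}
```

Fed with the fragment `Lift 18, Place 27, Place 34, Lift 43, Place 34` from game 1, this yields two moves, the first of which is a chain with two placements.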

Turning it into a Test

I'd like this to be separate from the other library tests, as this code gets a bit longer. Rust allows you to define "integration tests" which live outside your main code in the tests directory. Both resulting files are in the commit on GitHub.

In here, I have two tests:

- One "test" to generate the regression suite from the manually prepared input file. That one is not really a test though, so I'll need to put it on ignore. It's the "build suite" arrow from the diagram.

- A second, real test to verify that the logic still does what it is supposed to do.

Building the Regression Suite

    #[derive(Deserialize, Clone)]
    struct RegressionInput {
        id: usize,
        history: Vec<PacoAction>,
    }

With serde_json I can quickly get the file content into a Vec<RegressionInput>. I then step through all the moves that were played on the board and record the legal moves at each step. That gives me a Vec<RegressionValidation>:

    #[derive(Deserialize, Serialize, PartialEq, Eq, Debug)]
    struct RegressionValidation {
        id: usize,
        history: Vec<PacoAction>,
        legal_moves: Vec<Vec<PacoAction>>,
    }

I got some errors on the first try. It turns out that games 218 and 219 are now in an illegal state, because I changed the implementation at some point after they were played. But I can just filter them out, and the rest of the games processed cleanly.

    #[ignore = "This is not a real test, but rather the utility used to build the regression database"]
    #[test]
    fn build_regression_file() {
        let input: Vec<RegressionInput> =
            load_game_database("tests/all_non_empty_games.json");

        // Remove games where the engine now does something else.
        let input: Vec<RegressionInput> = input
            .into_iter()
            .filter(|data| !FILTERED_OUT.contains(&data.id))
            .collect();

        // Map each input to an output given the current logic.
        let output: Vec<RegressionValidation> = input
            .into_iter()
            .map(map_input_to_validation)
            .collect();

        // Write the output to a file.
        write_regression_database(output, "tests/regression_database.json");
    }

Here map_input_to_validation is the function that steps through the recorded actions of a game and collects the legal moves along the way.
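The body of `map_input_to_validation` is not shown here. A minimal sketch of the stepping idea could look like the following, under a few assumptions: the `Board` trait, `record_legal_actions` and the toy `Countdown` game are all hypothetical and only stand in for the real engine, and whether the real code records the legal moves before or after applying each action is a guess.

```rust
/// Hypothetical stand-in for the engine's board interface.
trait Board {
    type Action: Clone;
    /// All actions that are legal in the current position.
    fn legal_actions(&self) -> Vec<Self::Action>;
    /// Applies one action or reports why it is illegal.
    fn execute(&mut self, action: &Self::Action) -> Result<(), String>;
}

/// Replays `history` and records the legal actions before each step.
fn record_legal_actions<B: Board>(
    mut board: B,
    history: &[B::Action],
) -> Result<Vec<Vec<B::Action>>, String> {
    let mut recorded = Vec::with_capacity(history.len());
    for action in history {
        recorded.push(board.legal_actions());
        board.execute(action)?;
    }
    Ok(recorded)
}

/// Toy game used only to exercise the sketch: take 1 or 2 from a counter.
struct Countdown(u32);

impl Board for Countdown {
    type Action = u32;

    fn legal_actions(&self) -> Vec<u32> {
        (1..=2).filter(|n| *n <= self.0).collect()
    }

    fn execute(&mut self, action: &u32) -> Result<(), String> {
        if self.legal_actions().contains(action) {
            self.0 -= *action;
            Ok(())
        } else {
            Err(format!("illegal action {} with {} left", action, self.0))
        }
    }
}
```

Returning a `Result` is what surfaces games like 218 and 219: a history that the current rules reject fails during the replay instead of producing a snapshot.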

Running the Regression Suite

Now that we have a suite, we need to actually run it. It turns out all the pieces for this are already available:

    #[test]
    fn regression_run() {
        let games: Vec<RegressionValidation> = load_regression_database();

        for game in games {
            let input = RegressionInput {
                id: game.id,
                history: game.history.clone(),
            };
            let recomputed_game = map_input_to_validation(input);
            assert_eq!(game, recomputed_game);
        }
    }
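When a comparison like this fails, `assert_eq!` prints both complete values, which for a long game is a lot to read. One possible refinement, sketched here with a hypothetical helper `first_divergence` that is not part of the test file, is to locate the first step at which the recorded and recomputed legal moves differ.

```rust
/// Hypothetical helper: index of the first step where the two recordings
/// differ, or `None` if they are identical.
fn first_divergence<T: PartialEq>(expected: &[Vec<T>], actual: &[Vec<T>]) -> Option<usize> {
    let common = expected.len().min(actual.len());
    // Compare step by step over the shared prefix.
    if let Some(index) = (0..common).find(|&i| expected[i] != actual[i]) {
        return Some(index);
    }
    // Identical prefix but different length: the shorter one ends here.
    if expected.len() != actual.len() {
        Some(common)
    } else {
        None
    }
}
```

Note that this compares each step as an ordered list, just like the derived `PartialEq` on the struct does, so a move generator that returns the same moves in a different order would still count as a divergence.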

When we now ask cargo to run the test, we are left waiting for quite a while. At least we get an ok after 1.5 minutes:

    Running tests/validate_all_played_games.rs
    running 1 test
    test regression_run ... ok
    test result: ok. 1 passed; 0 failed; 0 ignored; 0 measured; 1 filtered out; finished in 90.22s

Let's try it again with --release:

    Running tests/validate_all_played_games.rs
    running 1 test
    test regression_run ... ok
    test result: ok. 1 passed; 0 failed; 0 ignored; 0 measured; 1 filtered out; finished in 5.29s

Much better! I'll be able to change the implementation of the core mechanics now and still sleep soundly :-)

What's Next?

I ran some more analysis and found that ten of the games (roughly 0.3%) take more than 50% of the time. I excluded them for now, because the unit tests don't run with the --release flag in CI. I'll certainly need to dig into those some more.

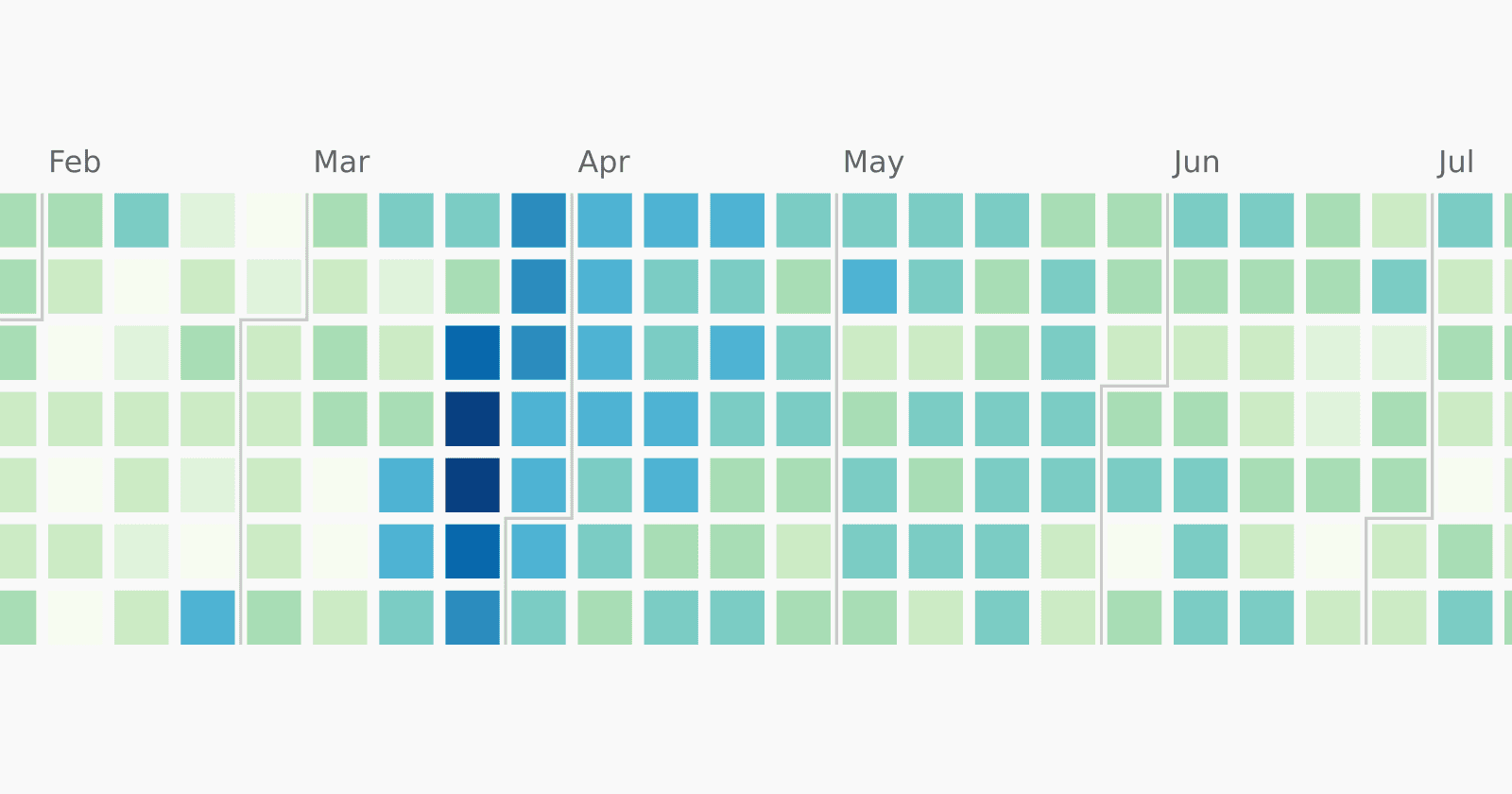

I'd love to show you some graphics of the runtime distribution, but my skills at that have somewhat degraded after leaving university.
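The analysis behind that number is not shown, but it could be done with nothing more than `std::time::Instant`. The sketch below is one way to do it; `time_games` and `share_of_slowest` are names made up for this example, and the closure stands in for whatever replays a single game.

```rust
use std::time::{Duration, Instant};

/// Runs `work` once per game id and returns `(id, elapsed)`, slowest first.
fn time_games<F: FnMut(usize)>(ids: &[usize], mut work: F) -> Vec<(usize, Duration)> {
    let mut timings: Vec<(usize, Duration)> = ids
        .iter()
        .map(|&id| {
            let start = Instant::now();
            work(id);
            (id, start.elapsed())
        })
        .collect();
    timings.sort_by(|a, b| b.1.cmp(&a.1));
    timings
}

/// Fraction of the total runtime spent in the `n` slowest entries.
fn share_of_slowest(timings: &[(usize, Duration)], n: usize) -> f64 {
    let mut durations: Vec<Duration> = timings.iter().map(|t| t.1).collect();
    durations.sort_by(|a, b| b.cmp(a));
    let total: Duration = durations.iter().sum();
    if total.is_zero() {
        return 0.0;
    }
    let top: Duration = durations.iter().take(n).sum();
    top.as_secs_f64() / total.as_secs_f64()
}
```

Calling `share_of_slowest(&timings, 10)` on the full suite would give the share of runtime spent in the ten slowest games, and the ids at the front of the sorted list are the candidates for the exclusion list.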

The regression test also gives me a good performance benchmark. I'll use it to figure out which parts of the move generator are terribly slow. I'm hoping to write the next blog posts about some of those optimizations.